As a part of our work on AcousticBrainz in the Music Technology Group, last year we ran a contest to see if we could build a better system for automatically identifying the genre of items in AcousticBrainz.

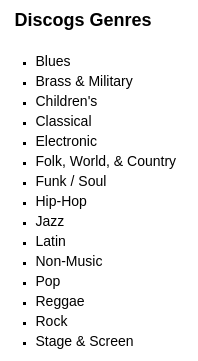

We collected genre annotations for a large subset of AcousticBrainz from four different sources. This dataset is interesting because it contains several levels of annotation (genres, subgenres, styles) and a much larger range of labels: the datasets currently in AcousticBrainz include about 10 different genres (“labels”), whereas the data that we collected has hundreds.

Part of our interest in running this contest is to see if people can come up with new ways of understanding, comparing, and combining the data that represents the different ways that people talk about music.

If you are interested in machine learning, we invite you to participate in the contest. Successful participants will be invited to present their work in Antibes, France, in October.

You can find more information on the contest website: https://multimediaeval.github.io/2018-AcousticBrainz-Genre-Task/

I’ve also included a full copy of our call for participants below.

Dear all,

We are pleased to announce the AcousticBrainz genre recognition task held as part of MediaEval 2018. The Benchmarking Initiative for Multimedia Evaluation (MediaEval) organizes an annual cycle of scientific evaluation tasks in the area of multimedia access and retrieval.

Our task is focused on content-based music genre recognition using genre annotations from multiple sources and large-scale music features data available in the AcousticBrainz database (https://acousticbrainz.org). In particular, we want to explore how the same music pieces can be annotated differently by different communities following different genre taxonomies, and how this should be addressed by content-based genre recognition systems.

The task invites participants to predict the genre and subgenre of unknown music recordings (songs) given automatically computed features of those recordings. We provide a training set of such audio features taken from the AcousticBrainz database, along with genre and subgenre labels from four different music metadata websites. The taxonomies that we provide for each website vary in their specificity and breadth. Each source has its own definition for its genre labels, meaning that these labels may differ between sources. Participants must train model(s) using this data and then generate predictions for a test set. Participants can choose to consider each set of genre annotations individually or to take advantage of combining the sources.

A full description of the task is available here:

All interested researchers are warmly invited to participate. Participants will be able to present their results at the MediaEval Multimedia Benchmark Workshop, 29-31 October 2018, in Sophia Antipolis, France: http://www.multimediaeval.org/mediaeval2018/

Feel free to contact us with any questions or suggestions.

The task is organized by the Music Technology Group, Universitat Pompeu Fabra (Dmitry Bogdanov, Alastair Porter), the Multimedia Computing Group, Delft University of Technology (Julian Urbano), and Tagtraum Industries Incorporated (Hendrik Schreiber).
